Index of / /uploads/*.mp4*|.img*|.jpg*|.avi*| ( Sextape )

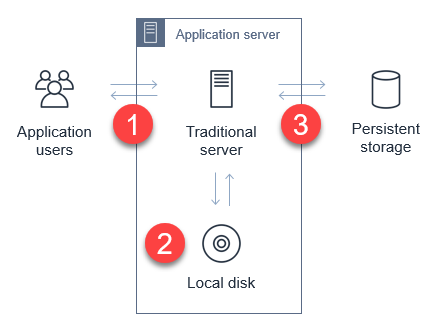

In web and mobile applications, it's mutual to provide users with the ability to upload data. Your application may allow users to upload PDFs and documents, or media such as photos or videos. Every modern web server technology has mechanisms to allow this functionality. Typically, in the server-based environment, the procedure follows this menstruation:

- The user uploads the file to the application server.

- The application server saves the upload to a temporary space for processing.

- The awarding transfers the file to a database, file server, or object store for persistent storage.

While the process is simple, it can have significant side-furnishings on the performance of the web-server in busier applications. Media uploads are typically large, and so transferring these can represent a large share of network I/O and server CPU fourth dimension. You lot must as well manage the state of the transfer to ensure that the entire object is successfully uploaded, and manage retries and errors.

This is challenging for applications with spiky traffic patterns. For example, in a web application that specializes in sending holiday greetings, it may feel most traffic merely around holidays. If thousands of users effort to upload media around the same fourth dimension, this requires yous to calibration out the application server and ensure that in that location is sufficient network bandwidth bachelor.

By directly uploading these files to Amazon S3, you lot can avoid proxying these requests through your application server. This can significantly reduce network traffic and server CPU usage, and enable your application server to handle other requests during busy periods. S3 as well is highly bachelor and durable, making it an ideal persistent store for user uploads.

In this blog mail, I walk through how to implement serverless uploads and show the benefits of this approach. This design is used in the Happy Path web application. You lot tin can download the code from this blog postal service in this GitHub repo.

Overview of serverless uploading to S3

When yous upload directly to an S3 bucket, you must first request a signed URL from the Amazon S3 service. Yous tin can then upload directly using the signed URL. This is two-footstep procedure for your application front cease:

- Phone call an Amazon API Gateway endpoint, which invokes the getSignedURL Lambda function. This gets a signed URL from the S3 saucepan.

- Directly upload the file from the application to the S3 bucket.

To deploy the S3 uploader example in your AWS business relationship:

- Navigate to the S3 uploader repo and install the prerequisites listed in the README.doctor.

- In a terminal window, run:

git clone https://github.com/aws-samples/amazon-s3-presigned-urls-aws-sam

cd amazon-s3-presigned-urls-aws-sam

sam deploy --guided

- At the prompts, enter s3uploader for Stack Name and select your preferred Region. In one case the deployment is consummate, note the APIendpoint output.The API endpoint value is the base of operations URL. The upload URL is the API endpoint with

/uploadsappended. For instance:https://ab123345677.execute-api.united states-due west-2.amazonaws.com/uploads.

Testing the application

I show two ways to test this application. The first is with Postman, which allows you to direct call the API and upload a binary file with the signed URL. The 2d is with a basic frontend application that demonstrates how to integrate the API.

To test using Postman:

- First, copy the API endpoint from the output of the deployment.

- In the Postman interface, paste the API endpoint into the box labeled Enter request URL.

- Choose Send.

- After the request is complete, the Body section shows a JSON response. The uploadURL aspect contains the signed URL. Copy this aspect to the clipboard.

- Select the + icon next to the tabs to create a new request.

- Using the dropdown, change the method from GET to PUT. Paste the URL into the Enter request URL box.

- Choose the Body tab, then the binary radio button.

- Choose Select file and choose a JPG file to upload.

Choose Send. You encounter a 200 OK response later on the file is uploaded.

- Navigate to the S3 console, and open the S3 saucepan created past the deployment. In the bucket, yous see the JPG file uploaded via Postman.

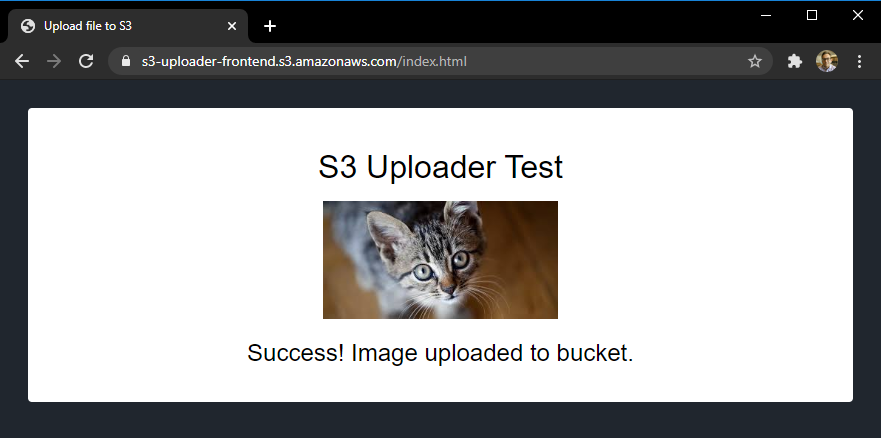

To test with the sample frontend awarding:

- Copy index.html from the case'south repo to an S3 bucket.

- Update the object'due south permissions to make it publicly readable.

- In a browser, navigate to the public URL of index.html file.

- Select Choose file and so select a JPG file to upload in the file picker. Choose Upload prototype. When the upload completes, a confirmation message is displayed.

- Navigate to the S3 console, and open the S3 saucepan created by the deployment. In the saucepan, you come across the second JPG file you lot uploaded from the browser.

Understanding the S3 uploading process

When uploading objects to S3 from a web application, you must configure S3 for Cross-Origin Resource Sharing (CORS). CORS rules are defined equally an XML certificate on the bucket. Using AWS SAM, you lot tin configure CORS as function of the resource definition in the AWS SAM template:

S3UploadBucket: Type: AWS::S3::Bucket Properties: CorsConfiguration: CorsRules: - AllowedHeaders: - "*" AllowedMethods: - Go - PUT - HEAD AllowedOrigins: - "*" The preceding policy allows all headers and origins – information technology's recommended that you employ a more restrictive policy for production workloads.

In the first step of the process, the API endpoint invokes the Lambda function to make the signed URL request. The Lambda function contains the following code:

const AWS = require('aws-sdk') AWS.config.update({ region: process.env.AWS_REGION }) const s3 = new AWS.S3() const URL_EXPIRATION_SECONDS = 300 // Master Lambda entry point exports.handler = async (event) => { return await getUploadURL(consequence) } const getUploadURL = async function(event) { const randomID = parseInt(Math.random() * 10000000) const Key = `${randomID}.jpg` // Get signed URL from S3 const s3Params = { Bucket: process.env.UploadBucket, Key, Expires: URL_EXPIRATION_SECONDS, ContentType: 'image/jpeg' } const uploadURL = await s3.getSignedUrlPromise('putObject', s3Params) render JSON.stringify({ uploadURL: uploadURL, Key }) } This function determines the name, or key, of the uploaded object, using a random number. The s3Params object defines the accepted content blazon and also specifies the expiration of the primal. In this case, the key is valid for 300 seconds. The signed URL is returned equally role of a JSON object including the fundamental for the calling application.

The signed URL contains a security token with permissions to upload this single object to this bucket. To successfully generate this token, the code calling getSignedUrlPromise must have s3:putObject permissions for the saucepan. This Lambda function is granted the S3WritePolicy policy to the saucepan by the AWS SAM template.

The uploaded object must match the same file proper name and content type every bit defined in the parameters. An object matching the parameters may be uploaded multiple times, providing that the upload process starts before the token expires. The default expiration is 15 minutes simply yous may want to specify shorter expirations depending upon your use case.

Once the frontend awarding receives the API endpoint response, it has the signed URL. The frontend application then uses the PUT method to upload binary data direct to the signed URL:

let blobData = new Blob([new Uint8Array(array)], {type: 'prototype/jpeg'}) const result = await fetch(signedURL, { method: 'PUT', trunk: blobData }) At this point, the caller application is interacting directly with the S3 service and not with your API endpoint or Lambda part. S3 returns a 200 HTML condition lawmaking once the upload is complete.

For applications expecting a large number of user uploads, this provides a simple mode to offload a large amount of network traffic to S3, away from your backend infrastructure.

Calculation authentication to the upload process

The current API endpoint is open, available to whatsoever service on the net. This means that anyone can upload a JPG file once they receive the signed URL. In most production systems, developers desire to utilize authentication to control who has access to the API, and who can upload files to your S3 buckets.

You can restrict access to this API past using an authorizer. This sample uses HTTP APIs, which support JWT authorizers. This allows y'all to control access to the API via an identity provider, which could be a service such as Amazon Cognito or Auth0.

The Happy Path application simply allows signed-in users to upload files, using Auth0 as the identity provider. The sample repo contains a 2d AWS SAM template, templateWithAuth.yaml, which shows how you can add an authorizer to the API:

MyApi: Blazon: AWS::Serverless::HttpApi Backdrop: Auth: Authorizers: MyAuthorizer: JwtConfiguration: issuer: !Ref Auth0issuer audition: - https://auth0-jwt-authorizer IdentitySource: "$request.header.Authorization" DefaultAuthorizer: MyAuthorizer Both the issuer and audience attributes are provided by the Auth0 configuration. By specifying this authorizer as the default authorizer, information technology is used automatically for all routes using this API. Read role 1 of the Enquire Around Me series to learn more than about configuring Auth0 and authorizers with HTTP APIs.

Subsequently authentication is added, the calling spider web awarding provides a JWT token in the headers of the asking:

const response = expect axios.get(API_ENDPOINT_URL, { headers: { Say-so: `Bearer ${token}` } }) API Gateway evaluates this token before invoking the getUploadURL Lambda role. This ensures that only authenticated users can upload objects to the S3 bucket.

Modifying ACLs and creating publicly readable objects

In the current implementation, the uploaded object is not publicly accessible. To brand an uploaded object publicly readable, you must set its access command list (ACL). In that location are preconfigured ACLs available in S3, including a public-read option, which makes an object readable by anyone on the internet. Prepare the appropriate ACL in the params object before calling s3.getSignedUrl:

const s3Params = { Bucket: process.env.UploadBucket, Key, Expires: URL_EXPIRATION_SECONDS, ContentType: 'paradigm/jpeg', ACL: 'public-read' } Since the Lambda function must have the appropriate bucket permissions to sign the asking, you must also ensure that the part has PutObjectAcl permission. In AWS SAM, you can add the permission to the Lambda function with this policy:

- Statement: - Consequence: Let Resource: !Sub 'arn:aws:s3:::${S3UploadBucket}/' Action: - s3:putObjectAcl Decision

Many web and mobile applications allow users to upload information, including large media files like images and videos. In a traditional server-based application, this tin can create heavy load on the application server, and as well apply a considerable amount of network bandwidth.

By enabling users to upload files to Amazon S3, this serverless pattern moves the network load away from your service. This can make your awarding much more scalable, and capable of handling spiky traffic.

This blog postal service walks through a sample application repo and explains the process for retrieving a signed URL from S3. It explains how to the test the URLs in both Postman and in a web awarding. Finally, I explain how to add authentication and make uploaded objects publicly attainable.

To acquire more than, see this video walkthrough that shows how to upload straight to S3 from a frontend web application. For more serverless learning resources, visit https://serverlessland.com.

Source: https://aws.amazon.com/blogs/compute/uploading-to-amazon-s3-directly-from-a-web-or-mobile-application/

0 Response to "Index of / /uploads/*.mp4*|.img*|.jpg*|.avi*| ( Sextape )"

ارسال یک نظر